The Empty Lot

AI for work is different than AI for learning. We need to act like it.

The second-most embarrassing thing I have ever done was to get super into DJing as a teenager.

I downloaded this DAW[1] called ‘Reason’ off LimeWire on a Gateway-era PC running Windows 98, and I spent a solid chunk of tenth grade arranging bass-thumping nothing-tracks for zero-alcohol house parties I’d host in my parents’ attic. The tracks were not good. They were not not-good in the way that, say, an early Animal Collective B-side is not-good — i.e. interestingly, ambitiously, with whiffs of intention and some sort of drive to assert something. They were instead the unlistenable doodlings of a Magic: the Gathering Junior Super Series competitor who had decided that the path to social legitimacy ran through a MIDI controller. I believe one such track sampled a duck call.

What I’ll tell you, though, is: I picked it up fast. Reason’s interface is complicated and modular and mimics a hardware rack of cables and routing buses and sends and inserts and side-chains, and I absorbed all of that the way you absorb the layout of your kitchen — through prolonged accidental contact, in the service of trying to do something else. Nobody taught me. Nobody had to. I was just trying to string a song together.

But the most embarrassing thing I’ve ever done was try to ride this hobby horse again twenty years later.

‘Reason’ is, by every reasonable[2] measure, easier to use now than it was in 2002. The interface is cleaner. The samples are better. There are tutorials on YouTube made by patient men with calm voices and great beards who have not once ripped their hair out watching other grown-ass men fail to execute a click-and-drag.

None of it helped. My middle-aged man-brain was just like, ‘nah’. I calendar-blocked an entire evening to gaze lasers into my iMac like The Watcher until everything clicked, temple throbbing as I tried to Prometheus a track into existence — but I could physically feel my cognition glitch against the various navigations. Where was the sample library? Why couldn’t I just click on anything? None of the drop-downs so much as suggested a relationship with anything I even vaguely intended to do. God forbid I touch a knob.

I had become a guy who uses YouTube comments unironically. “Yo @Chrisreedbeats! Loved the vid. One Q: where’s the save button?”

Anyway, this is all I think about when I wade face-first into a Tweet thread about the proper role of AI in education.

You can’t really fade this stuff on the timeline.

My guy Tyler Austin Harper has been making the case in The Atlantic for what he calls the nuclear option — ban the laptops, jettison the phones, cut the wifi, drag the institution back into something that contains a floor, walls, ceiling, students, desks, and the occasional teacher all chilling there together in three-dimensional space. I am less skeptical than Tyler is, broadly. But the thing he lands, fairly, is that we cannot let these models into the classroom with zero reservation. It’d be like distilling moonshine in chemistry lab and shrugging when the kids start to help themselves to a beaker-ful.

This is hardly an isolated opinion. Anastasia Berg called the teacher-side accommodation of this moment “massive cope.” Andy Smarick, whose tweet I made you read a second ago, called it “the most obvious unforced error of our time.”

A different group, in a different room, is making roughly the opposite case. My friend Ian Temple, who runs a music-instruction site called Soundfly, has been pushing for AI tools built specifically for learning — ones that ask questions instead of reflexively answering them, that show pathways instead of conclusions. With more dollars and gasoline behind them are SF-coded folks at outfits like Synthesis and Alpha School, whose aim is to deliver every American child something like her own personalized Young Lady’s Illustrated Primer — the infinitely patient guide that meets her where she is, adapts to her interests, and assembles her, across the long arc of a well-attended childhood, into the best possible version of herself.

Both arguments hold up on their own terms. But which of them is right depends on the nature of what learning is and how it actually happens inside the actual body and brain of an actual young person.

Picture your friendly neighborhood Barnes & Noble. The biggest sections, by floor space, are romance and self-help[3]. After that you have cookbooks, memoir, true crime, the inexplicable but evidently lucrative books-about-narwhals-aimed-at-third-graders, the sad gnarled desiccated rump of literary fiction, and a few full shelves dedicated to whichever financier most recently decided he had five leadership secrets beginning with the letter “S” to share.

You will not find a section called Five-Paragraph Essays. Nobody in the entire history of leisure reading has settled into bed with a glass of wine and said, “What I want tonight is a tightly organized prose unit with an introduction, three body paragraphs, and a conclusion that restates the thesis.” The most-taught genre of writing in the English-speaking world exists almost exclusively inside classrooms. After that, just like Keyser Söze, it’s gone.

I taught composition for a few years, and I had always hated five-paragraph essays. So my first year of teaching I just nobly let students write whatever shape made sense to them, gentle parenting, free-at-last-thank-God-almighty-etc.

The results were beautiful in patches but unreadable in aggregate. Kids with strong instincts produced strong work. But kids whose instincts had not yet been formed produced sentences that wandered off, and arguments that evaporated, and conclusions that failed to connect to any other string of words on the page.

I swallowed my pride and started assigning five-paragraph essays the very next year.

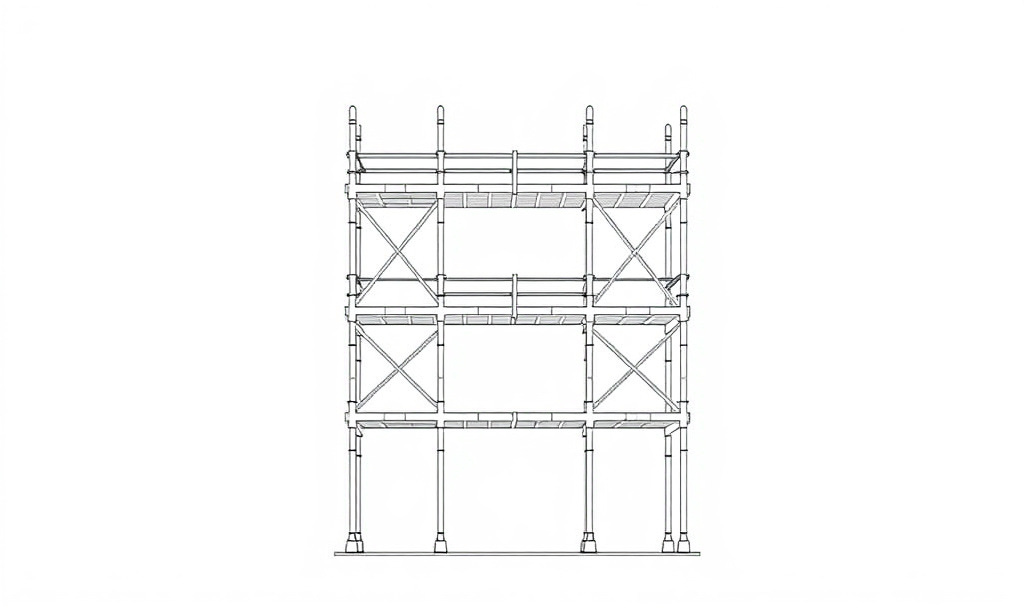

The five-paragraph essay is a scaffold, a training-wheels superstructure that forces a learner to notice that an argument has to be introduced, that supporting points have to be ordered, that transitions exist, and that a conclusion has to actually resolve a thesis. Once a writer has internalized why those moves matter, the scaffolding can come down, since the job’s done.

But you can’t tear down a wall you’ve never built.

I learned the same lesson coaching college Mock Trial. We taught the kids a thing called CIRAC — Conclusion, Issue, Rule, Application, Conclusion — for handling objections in front of a judge. I, of course, hated CIRAC when I was a competitor, on the grounds that I was a Free Thinker and not bound by the binding constraints of <gestures vaguely>. After all, the judge needed to hear whatever brilliant thing I felt compelled to say.

But as a coach, I wouldn’t shut up about it, because the moment a student stopped CIRAC-ing, she would forget something important. Arguments became mere words. She would gesture at the issue and say a bunch of things; her voice would gradually trail off as her throat got dry; and the whole dance would conclude with the student gazing meekly at the judge as if to beg him to fill in the rest of the sentence for her. In the worst cases, the judge would indeed oblige.

CIRAC was not the goal. CIRAC was the noticing-machine that made sure the goal got hit.

The cognitive scientist Robert Bjork has spent thirty years documenting the underlying mechanism at play here. The preferred term of art is desirable difficulties. Conditions that make learning feel easy turn out, with depressing regularity, to produce worse long-term retention than conditions that feel slower and harder and more uncomfortable.

Friction, in other words, is not a bug. Rather, in many cases, it’s the causal mechanism by which learning happens.

Take the calculator. The same device plays a different role at every stage of a student’s mathematical development. In the early grades it exposes patterns. In the middle grades it checks work. In high school it performs operations that are by then well enough understood to outsource.

What’s happening is that the activity itself is changing as the schema underneath it solidifies. The National Council of Teachers of Mathematics — bless them — has been writing position papers about this exact distinction for decades. We know how to do this kind of thing (in theory anyway). We have been doing it for a hundred years in virtually every domain that has ever asked us to teach a young person anything.

But we are not yet doing it for AI.

The reason[4] fifteen-year-old me could pick up Reason in an afternoon — and thirty-five-year-old me could not pick it up at all — is that fifteen-year-old me had a brain whose job, at that exact moment, was to absorb the underlying logic of any new system it encountered. Its role was to build, in real-time and beneath the level of conscious effort, the schema that an interface like Reason runs on top of. The cables and routing and sends and inserts were the architecture I was unconsciously assembling as I consciously tried to scrape a song together.

Thirty-five-year-old me didn’t have that brain anymore. That version of me had a brain that was comparatively good at executing against schema it had already built, but which had seriously taken an L when it came to building new schema from scratch. Reason assumed I’d built up the underlying mental model of how its world fits together. I hadn’t. I couldn’t anymore. The construction window for that particular kind of architecture had quietly closed sometime in my late twenties, and nothing about a calmer YouTube tutorial or a more colorful drop-down was going to reopen it.

What we are doing when we jam AI into education all willy-nilly is taking a tool that was designed to help adults execute against schema they have already built — to draft the memo faster, summarize the deposition automatically, answer the email without saying “but anyway, so”[5] — and dropping it into the hands of children whose schema are still under construction.

That short-circuits the entire process. The issue with the kid asking a chatbot to write his book report on Lord of the Flies has nothing to do with whether the paragraph is better or worse than what he would have written otherwise. Rather, it’s that the act of writing the paragraph was the only way the operating system for distilling an argument was ever going to get installed in his head. The artifact was always the byproduct. The activity was the point.

So when we take the activity away, there are big problems. The argument-distillation process never happens. The kid grows into an adult who can’t follow the case for a war, can’t tell whether the candidate is dodging the question, can’t read the fine print on his own thirty-year mortgage and notice what he’s being asked to agree to. None of it lands as missing, because how would he know? The scaffolding has been taken down on schedule. It’s just that what’s left is an empty lot.

I’ve argued before that we have a moral responsibility to deploy frontier AI against social and civic problems where it fits. But that responsibility cuts both ways — equally important is an evaluation of what fits where. And the activity of building the schema that a person will spend the rest of her life executing against is one whose mechanics are not negotiable. The friction itself is the juice. You can’t run a marathon without first putting in laps at the gym. You can’t collect $200 if you haven’t first passed Go.

The optimists are right that AI could be transformative for learning. It just has to be the right kind of AI. Because the pessimists are also right that something is being destroyed when we unleash frontier models into our classrooms like pathogens upon a virgin continent. The version of AI that is good at executing tasks faster — or, via automation, dodging the need to execute those tasks entirely — is wrong for the job in exactly the way a screwdriver is the wrong tool for a nail.

What people like Ian are pointing at — AI built to ask questions, to show pathways, to preserve and structure the friction rather than collapse it — would represent a fundamentally different tool. It would be a real innovation if we built it. But we haven’t gotten there quite yet.

Picture a fourteen-year-old who is somewhere in the middle of figuring out how to read a dense argument and volley one back. An AI tutor meets her at the edge of what she can almost do — asks her what she thinks before it tells her what it thinks, gives her real feedback in real time, calibrates the next challenge to be just barely harder than the last, adapts to what she is genuinely curious about. Forty years of motivation research from Edward Deci and Richard Ryan would predict, with confidence, that a tool like that would be one of the most powerful learning instruments anyone has ever built.

Now imagine the same fourteen-year-old, in a slightly different timeline, asking the off-the-shelf model to write her literary-analysis paragraph for her, and turning it in.

I still can’t DJ. I can live with this. The world will not particularly suffer for the loss of one more DJ D-Sol. But this girl here is not losing a hobby. She is losing the scaffolding she was supposed to be using to figure out who she is going to become.

Her brain is building itself right now. It will not be building itself forever. What she puts into it at this critical juncture determines whether her future self will be capable of all the things she has not yet discovered she will yearn for.

She can’t yet anticipate that future. But we can. And we should.

[1] Digital Audio Workstation, which I’m footnoting here because I absolutely want to act like the kind of person who just intuitively knows what this acronym means, since this is arguably the first concrete payoff I’ve ever enjoyed for the truly insane amount of time I’ve put into this whole (ahem) “skill”.

[2] My wife helps edit these and her GDoc comment here said “I am not going to even try to convince you to delete this.”

[3] One great thing about being a Millennial is that I can unironically roll my eyes at this and simply act superior in an entirely unearned way.

[4] I bet I can sneak this one in here. UPDATE: we did it.

[5] “Thanks so much! Appreciate you, -Z”
